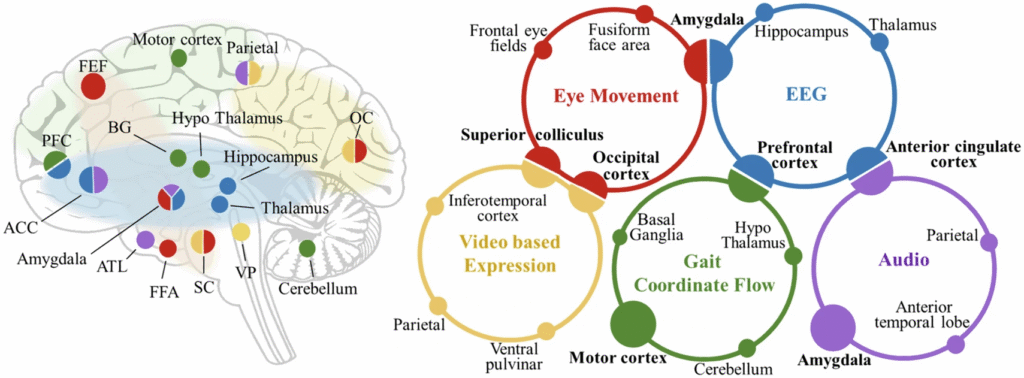

Brain activation patterns in depression across key functional domains, including altered emotional processing, negative attentional bias, delayed auditory responses, and impaired sensorimotor-emotional integration. BG Basal Ganglia, ATL Anterior Temporal Lobe, FFA Fusiform Face Area, VP Ventral Pallidum, SC Superior Colliculus, OC Occipital Cortex.

“AI-assisted multi-modal information for the screening of depression: a systematic review and meta-analysis”:

https://www.nature.com/articles/s41746-025-01933-3

Background

- Depression is one of the most common and disabling mental health conditions worldwide.

- Traditional screening relies on self-report questionnaires and clinical interviews, which can be subjective, time-consuming, and prone to under-detection.

- AI-assisted approaches using multi-modal data (e.g., voice, facial expression, EEG, heart rate, text, and behavior) are emerging as promising tools for more objective and scalable screening.

Methods

- The authors conducted a systematic review and meta-analysis of published studies applying AI to depression screening.

- Modalities included:

  - Physiological: EEG, heart rate variability, neuroimaging signals.

  - Behavioral: speech, facial expression, eye-tracking, gait, smartphone usage.

  - Multi-modal fusion: combining two or more data streams.

- Extracted and pooled classification performance metrics: accuracy, sensitivity, specificity, AUC.
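As a rough illustration of the metrics listed above, the following sketch computes accuracy, sensitivity, and specificity from confusion-matrix counts and pools them across studies by summing the counts. The `StudyCounts` class, the function names, and the pooling-by-summation approach are assumptions made for illustration only; the review's actual pooling model is not described in this summary, and published diagnostic meta-analyses typically use more elaborate random-effects models.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class StudyCounts:
    """Confusion-matrix counts for one study (hypothetical container)."""
    tp: int  # depressed participants correctly flagged
    fp: int  # non-depressed participants incorrectly flagged
    tn: int  # non-depressed participants correctly cleared
    fn: int  # depressed participants missed


def sensitivity(c: StudyCounts) -> float:
    """Share of depressed participants the model detects."""
    return c.tp / (c.tp + c.fn)


def specificity(c: StudyCounts) -> float:
    """Share of non-depressed participants the model clears."""
    return c.tn / (c.tn + c.fp)


def accuracy(c: StudyCounts) -> float:
    """Share of all participants classified correctly."""
    return (c.tp + c.tn) / (c.tp + c.fp + c.tn + c.fn)


def pool_counts(studies: Iterable[StudyCounts]) -> StudyCounts:
    """Naive pooling: sum the counts across studies.

    This is a simplification chosen for clarity, not the method
    used in the review.
    """
    studies = list(studies)
    return StudyCounts(
        tp=sum(s.tp for s in studies),
        fp=sum(s.fp for s in studies),
        tn=sum(s.tn for s in studies),
        fn=sum(s.fn for s in studies),
    )
```

Summing counts gives larger studies more influence, which is one reason real meta-analyses prefer models that also account for between-study variation.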

Key Findings

- Overall performance: AI-assisted methods showed good discriminatory ability for identifying depression.

- Multi-modal models consistently outperformed single-modality approaches.

- EEG + behavioral data combinations yielded the highest accuracy.

- Voice and facial expression analysis were particularly promising for non-invasive, real-world screening.

- Heterogeneity: Results varied depending on dataset size, feature extraction, and AI algorithms.

- Limitations: Many studies had small samples, lacked external validation, and risked overfitting.
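To make the idea of multi-modal fusion more concrete, here is a minimal sketch of one common strategy, late fusion, in which each modality produces its own probability and the probabilities are combined by a weighted average. The function names, the modality keys, and the 0.5 threshold are hypothetical; the studies covered by the review may fuse data in quite different ways (for example, by concatenating features before training).

```python
from typing import Dict, Optional


def late_fusion(
    probabilities: Dict[str, float],
    weights: Optional[Dict[str, float]] = None,
) -> float:
    """Weighted average of per-modality depression probabilities.

    `probabilities` maps a modality name (e.g. "speech", "eeg") to the
    probability produced by that modality's own model. If `weights` is
    omitted, every modality counts equally.
    """
    if not probabilities:
        raise ValueError("at least one modality is required")
    if weights is None:
        weights = {name: 1.0 for name in probabilities}
    total_weight = sum(weights[name] for name in probabilities)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    weighted_sum = sum(
        probabilities[name] * weights[name] for name in probabilities
    )
    return weighted_sum / total_weight


def screen_positive(
    probabilities: Dict[str, float],
    threshold: float = 0.5,  # illustrative cut-off, not a clinical value
    weights: Optional[Dict[str, float]] = None,
) -> bool:
    """Flag a participant when the fused probability reaches the threshold."""
    return late_fusion(probabilities, weights) >= threshold
```

A call such as `late_fusion({"speech": 0.6, "eeg": 0.8}, weights={"speech": 1.0, "eeg": 3.0})` lets a more trusted modality dominate the fused score. In practice the weights would need to be learned or validated on held-out data, which ties back to the external-validation limitation noted above.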

Implications

- AI-assisted multi-modal screening could:

  - Enable early detection of depression in both clinical and community settings.

  - Provide objective, scalable, and low-burden tools to complement clinician judgment.

  - Reduce reliance on self-report measures.

- To move toward clinical translation, the field needs:

  - Larger, diverse, and representative datasets.

  - Standardized protocols for data collection and model evaluation.

  - Ethical safeguards for privacy, bias, and transparency.

  - Regulatory validation before deployment.

Conclusion

AI-assisted multi-modal information is a promising frontier for depression screening.

- Fusion models (combining physiological and behavioral data) show the strongest potential.

- However, the field is still in its early stages, and robust validation is essential before integration into routine practice.

Content created by Copilot GPT-5